By Jessica Grinspoon, Cyabra Team

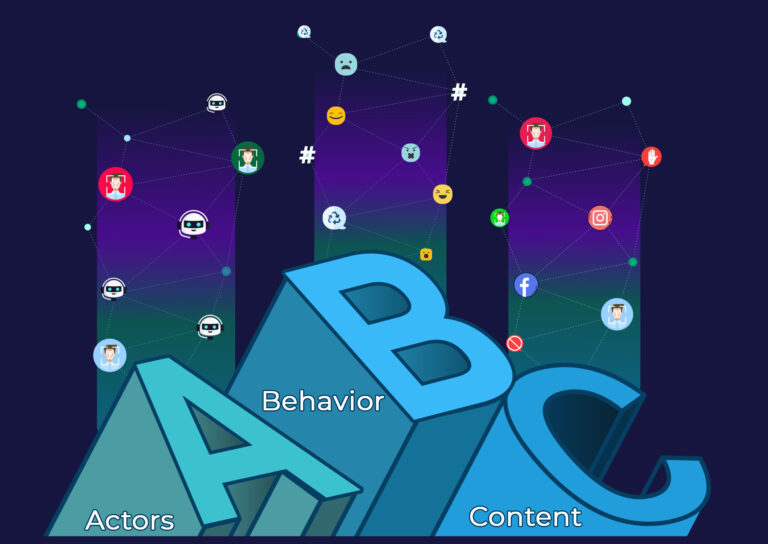

The media is saturated with falsehoods that exploit the internet’s ability to reach broad audiences. The internet has become a megaphone for actors carrying out disinformation campaigns. Because it is a direct channel of communication, any user can broadcast unfiltered content. As a result, false narratives and fabricated stories can disguise themselves as fact in text, sound, and visuals.

Creating and Circulating Content

It is essential to distinguish between content types because not all manipulated content is ‘fake’. Genuine photographs and videos can be resurfaced and presented as evidence of recent events. For example, an image from Vietnam, captured in 2007, was re-circulated in 2015 as if it depicted the aftermath of an earthquake in Nepal. Genuine quotes and statistics can also be pulled from interviews and used as headlines that spread information out of context.

Yellow Journalism is an American term coined in the 1890s to describe illegitimate news that emphasized sensationalism over facts. One of its techniques remains evident in modern journalism: fabricated headlines and social media captions designed to grab attention and evoke psychological responses. The catch is that the fabrications are often not backed up by the text inside. The hope is that viewers will repost and spread the content before actually reading it. This chain reaction increases the information’s virality regardless of how truthful it is.

As we’ve previously learned, bots are destructive tools that humans create to generate content automatically. These tireless lines of code give specific messages more visibility and advance disinformation agendas at alarming rates.

Editing Content

Disinformation is not limited to computer-generated text. It can also disguise itself in images and videos, where it is more difficult for someone to distinguish fact from fiction.

Deepfakes are another form of warfare created to manipulate visual and audio content. Deepfake technology can create and alter photos and videos of people saying and doing things they did not actually say or do.

Manipulated videos are commonly used to disrupt society’s perception of political figures. In 2019, a widely shared video of U.S. House Speaker Nancy Pelosi’s speech was slowed down to make her appear drunk and taint her credibility. That clip relied on simple editing rather than AI, which shows how little it takes. With access to high-tech computer software, anyone can edit visuals and manipulate sound, making it even harder to distinguish real from fake.

In contrast to increasingly realistic, AI-generated deepfakes, shallow fakes are generally real images and videos that someone has relabeled and presented out of context. Memes are a kind of shallow fake that is extremely effective in altering public perceptions.

The Aftermath

Whether it is bending the truth or propagating outright lies to inflame social conflict, taint a company’s image, disrupt political elections, or influence an individual’s perception, all manipulated information has one thing in common – destruction.

If parents withhold vaccinations from their children based on a mistaken belief, public health suffers. If citizens vote for a candidate based on fabricated stories and manipulated videos, a country’s political agenda becomes skewed. In some cases, disinformation leads to physical destruction. The infamous “Pizzagate” conspiracy theory led a man to open fire inside a pizzeria falsely linked to human trafficking. This piece of fiction was created by far-right communities to harm Hillary Clinton’s 2016 presidential campaign.

After learning the various ways one can manipulate the media and analyzing the effects on societal structures, I’m sure you are wondering how we can diminish this destruction. How is it humanly possible to distinguish fact from fiction? In the next article, I will explain how you can take part in flattening the disinformation curve.

The Disinformation For Beginners series includes:

- Part 1: Information Types: What is the difference?

- Part 2: Who is Manipulating the Media?

- Part 3: Are You Being Manipulated?

- Part 4: Flattening the Disinformation Curve & Cyabra’s Disinformation Term Log