Where there are elections, there are bots.

This statement isn’t new, but in recent years Cyabra has uncovered many cases showing that election-meddling bots are a growing problem, one we can no longer ignore as a society. It’s not just the sheer number of fake profiles taking part in social discourse. What should really bother us is that those bots keep getting better at appearing authentic: they don’t “just” sound human – they sound opinionated, passionate, involved, and engaged – everything we expect from authentic profiles.

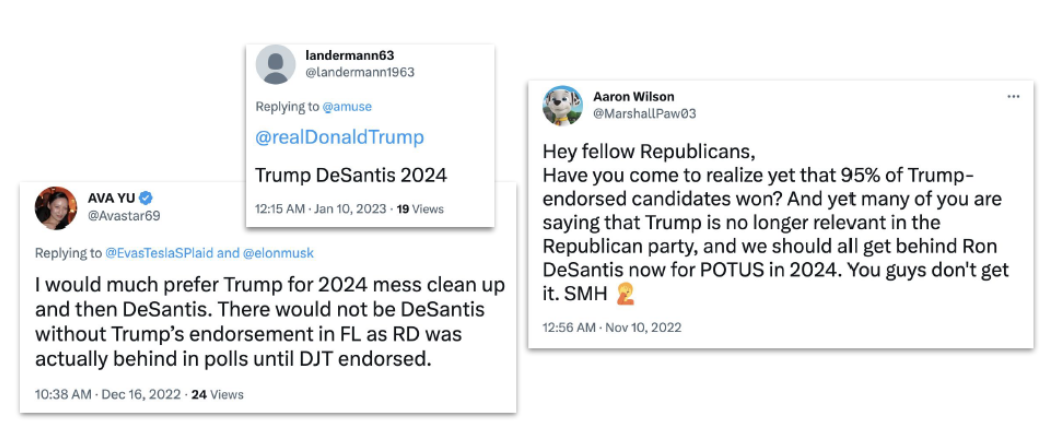

Check out the profiles below, for example. Two of them might look real, and one even has the blue Twitter tick. Yet Cyabra can say with high certainty that all three are fake.

It’s Never Too Early for Disinformation

The bad actors behind this current bot campaign started sowing the seeds long before the 2024 elections – years before, actually. Cyabra’s research uncovered three massive bot farms that took part in social conversations regarding the elections: the first was created in April 2022, the second in October 2022, and the last in November 2022 – two whole years before the elections, and the very same month Trump announced that he would be running for the presidency again in 2024.

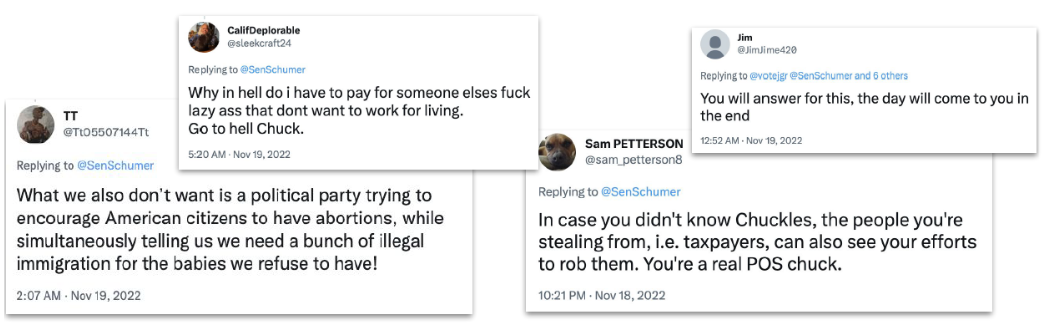

All the bots that Cyabra uncovered were created in the US. Those bot networks were neither pro-Democrat nor pro-Republican: they were pro-Trump, and worked both to discredit the Democratic Party and to splinter the Republican Party. Among the attacked politicians were Sen. Chuck Schumer, Rep. Kevin McCarthy, Rep. Hakeem Jeffries, Gov. Ron DeSantis, former Gov. Nikki Haley, and President Joe Biden. Some of the fake discourse didn’t even explicitly discuss Trump, but criticized his opponents while presenting opinions that aligned with pro-Trump ideas.

The Bot-tomless Pit of Online Manipulation

While fake accounts typically make up between 4% and 8% of the participants in online conversations, in this bot campaign Cyabra uncovered between 20% and 50% fake accounts on average. The share was even higher around some of the topics in the debate: for example, a huge 68% of the profiles promoting the idea that Gov. DeSantis would be better suited as Trump’s vice president were fake. This fake discourse created the illusion of widespread popular sentiment supporting a Trump/DeSantis run in 2024, but when Cyabra removed the fake profiles from the equation, authentic sentiment showed that the idea had far less support than it seemed.
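To make the "removing fake profiles from the equation" step concrete, here is a minimal sketch of the arithmetic. It is not Cyabra's method or data: the `Profile` fields, the `is_fake` label (assumed to come from some separate detection step that this article does not describe), and the ten sample profiles are all hypothetical, chosen only to illustrate how a claim's apparent support can shrink once flagged accounts are filtered out.

```python
from dataclasses import dataclass


@dataclass
class Profile:
    handle: str           # hypothetical account name
    is_fake: bool         # assumed output of a separate detection step
    supports_claim: bool  # whether the profile's posts back the claim


def support_share(profiles):
    """Fraction of the given profiles that support the claim (0.0 if empty)."""
    if not profiles:
        return 0.0
    return sum(p.supports_claim for p in profiles) / len(profiles)


def compare_sentiment(profiles):
    """Compare support for a claim with and without flagged profiles."""
    fake = [p for p in profiles if p.is_fake]
    authentic = [p for p in profiles if not p.is_fake]
    return {
        "fake_share": len(fake) / len(profiles) if profiles else 0.0,
        "support_all": support_share(profiles),
        "support_authentic": support_share(authentic),
    }


# Illustrative data only: 6 flagged profiles that all push the claim,
# plus 4 authentic profiles, of which 1 supports it.
sample = (
    [Profile(f"bot_{i}", True, True) for i in range(6)]
    + [Profile("user_0", False, True)]
    + [Profile(f"user_{i}", False, False) for i in range(1, 4)]
)

result = compare_sentiment(sample)
for name, value in result.items():
    print(f"{name}: {value:.0%}")
```

In this made-up sample, the claim appears to have majority support when every profile is counted, but only a minority of the authentic profiles actually back it.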

Another enormous number of bots discussed Senator Chuck Schumer and his immigration policy, spreading negative sentiment and criticizing Schumer. Cyabra’s analysis found that 95% of the profiles discussing this topic were fake.

A similar share (94%) of the profiles harshly criticizing Senator McConnell, one of Trump’s most outspoken critics in the Republican Party, were fake. The fake profiles claimed McConnell was a “traitor” to the Republican Party, creating the false impression of extensive criticism of McConnell – which again proved significantly less dominant when fake profiles were removed from the conversation.

Read the second part of this story, where we delve into another popular topic in the 2024 election discourse: President Biden’s and former President Trump’s mishandling of classified documents, and which of those allegations is treated with much more severity in online discourse. We’ll also compare the amount of fake activity surrounding elected officials from different sides of the political map.

______________

Cyabra is a social threat intelligence company, uncovering threats to your company, product, people, and places by exposing malicious actors, disinformation, and bot networks online. Contact us for more information or to set up a demo.