The explosion of generative AI has brought many benefits but has also created humanity-changing risks.

As its use has grown, anxiety among employees around the world has escalated. Marketing teams, content writers, graphic designers, and even developers have admitted to being intimidated by the speed, ease of use, and high quality of GenAI’s work.

We were afraid of AI, but for the wrong reasons.

The Real Problem with GenAI Tools

GenAI won’t develop consciousness and try to destroy humanity. It won’t take our jobs, at least not anytime soon. What it does do is spread misinformation and make up fake sources.

In the last few weeks, we have witnessed misinformation from large language models in the form of “hallucinations,” as well as fake news websites publishing hundreds of AI-generated articles a day (including a false report of the death of President Biden).

The World Health Organization has warned against misinformation in the use of AI in healthcare, saying, “data used to train AI may be biased and generate misleading or inaccurate information”.

But the real reason to be wary of AI is not, in fact, AI itself. It’s those who use it.

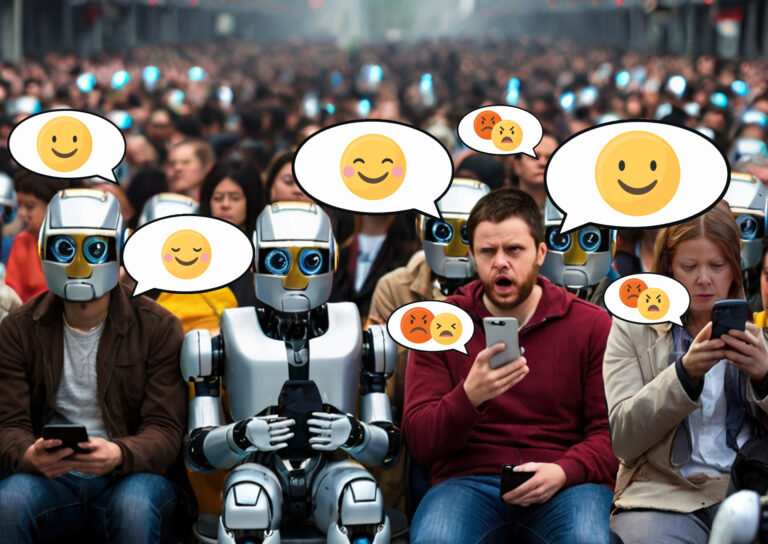

Malicious actors are creating bot networks to manipulate social discourse, spread fake news, and create confusion, alarm, and mistrust. GenAI tools make them significantly more efficient.

In the hands of someone trying to manipulate conversations on social media, GenAI is an effective weapon, and one we need to actively protect ourselves against.

The dangers of GenAI continue to mount as the number of fake social media accounts and the volume of online disinformation grow rapidly. For users of social media like you and me, it raises the question: How do you know if what you’re reading is real, fake, or intended to do harm?

Using AI to Identify AI Is the Future

When Cyabra was established, we had a mission: to fight disinformation and make social media and the online sphere safer. As a company, we have watched the misuse of GenAI tools by bad actors with growing concern, and we have decided to act.

Today, we are introducing our solution, a new tool developed to solve the challenge of GenAI-created content: botbusters.ai.

Botbusters.ai is a free-to-use service that detects AI-generated text and images, as well as fake profiles, all in one place.

Society’s ability to differentiate between materials created by humans and those created by AI will play an essential role in our future security and cyber health.

Automobiles led to car safety, the internet led to the cybersecurity industry, and cloud computing led to cloud-based security systems. Software that creates a problem can also provide the solution.

Cyabra has created botbusters.ai to help bring trust back to the online realm. Try out botbusters.ai now, and help us spread the word to create a safer, more trustworthy digital sphere.

Your move, AI.

(Looking for inspiration? Try this AI-generated text or AI-generated image.)