The Invisible Hand: How Synthetic Social Media Campaigns Are Reshaping Corporate Reality

With nearly 12 years on both sides of influence operations, Cyabra CPO Yossef Daar breaks down how synthetic campaigns move markets and destroy reputations.

Introducing a new way to analyze news at scale: separating verified claims from narrative distortion to understand what’s really being said.

Cyabra analyzed coordinated online activity surrounding the ongoing conflict with Iran, uncovering a large-scale influence operation that generated over 145 million views across major social media platforms in under two…

Cyabra’s 2026 rebrand marks its evolution from a detection-first startup to a mission-critical infrastructure provider, shifting the industry focus from uncovering disinformation to restoring global trust through evidence-led clarity.

Cyabra’s Narrative Alerts is an AI-powered early warning system that detects and neutralizes coordinated disinformation and harmful online narratives in real time to protect brand reputation and public trust.

Cyabra’s experts share the most compelling articles and investigations they’ve read this month, from the rise of AI-driven "digital fog" to the global collapse of trust. Read the latest on…

As nationwide protests in Iran surged over the past month, Cyabra conducted a deep analysis of online discourse to uncover the forces seeking to influence the grassroots protests…

Cyabra and Carahsoft have partnered to provide U.S. Public Sector agencies with streamlined access to AI-powered solutions for detecting disinformation and countering influence operations in real time.

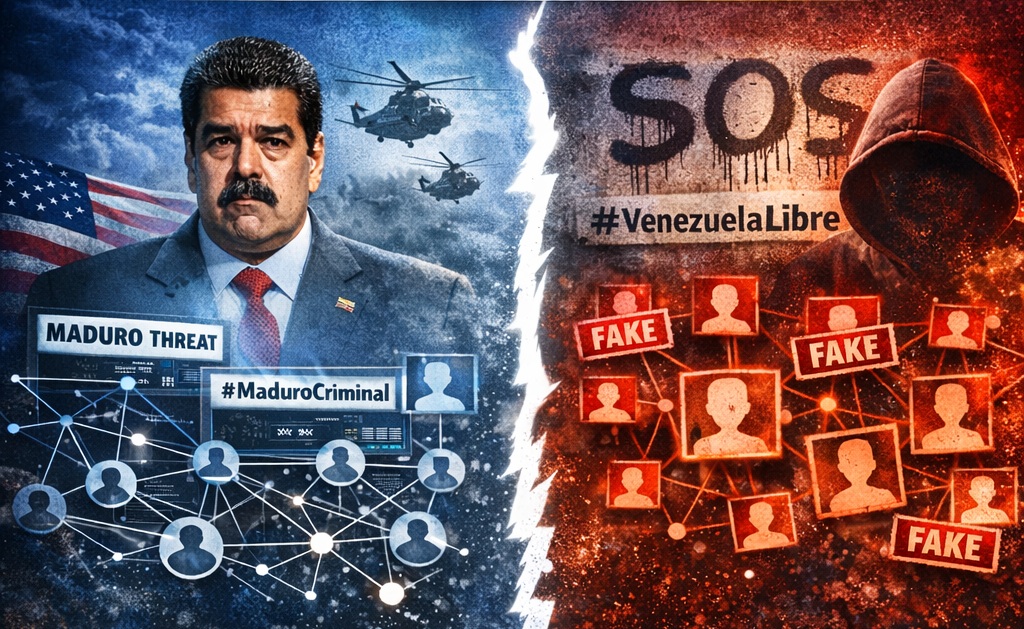

Cyabra uncovered how fake profiles executed a three-phase campaign that reversed public perception of the US-Venezuela operation within just 6 hours, with 23% of profiles identified as inauthentic.