Cyabra conducted two investigations into coordinated online activity surrounding the ongoing conflict with Iran, covering the period from February 28 to March 23, 2026. Across both, a consistent picture emerged: a large-scale influence operation combining AI-generated deepfakes, networks of inauthentic accounts, and synchronized posting patterns to shape global perception of the war while the fighting was still under way. This is what we found.

- Cyabra identified a coordinated influence campaign generating over 145 million views across X, Facebook, Instagram, and TikTok in under two weeks

- 19% of accounts analyzed were inauthentic, amplifying AI-generated deepfakes and fabricated war footage at scale. TikTok was the source of 72% of total campaign views (over 105 million)

- Three core narratives drove the first wave of the campaign. A second wave shifted focus to manufacturing the perception of American failure, reaching 40 million additional views

- Coordination indicators, including synchronized posting, reused content, and fixed hashtag clusters, point to a centralized operation with links to previous Iran-aligned campaigns

Pro-Iran Campaign Built Around Three Narratives

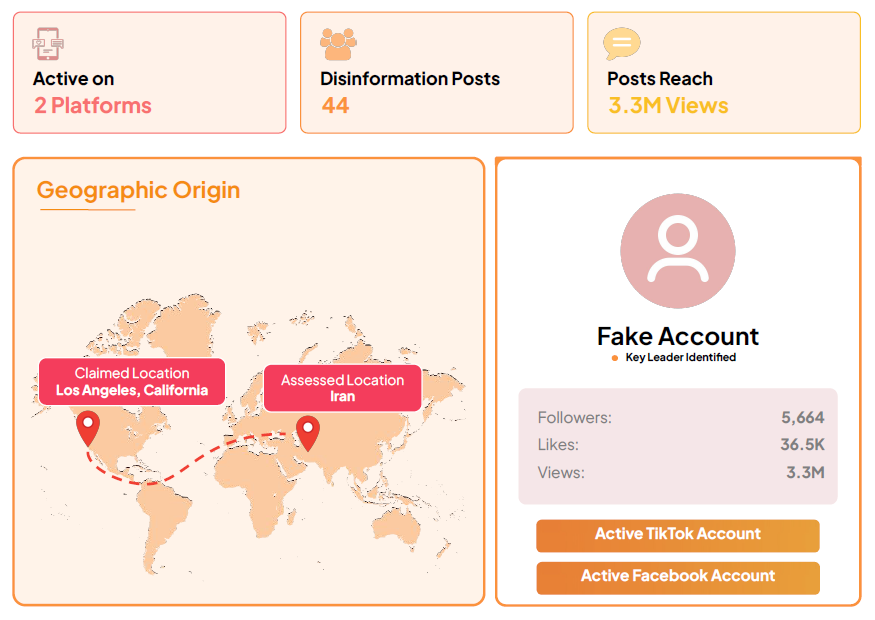

In the first investigation, Cyabra analyzed social media activity across X, Facebook, Instagram, and TikTok between February 28 and March 7, 2026. Of the accounts involved in the relevant online discourse, 19% were inauthentic; together they generated and amplified over 37,000 posts, reaching over 18 million potential views.

The content was structured around three core narratives, each advancing the same underlying message: that Iran is the dominant power in the conflict.

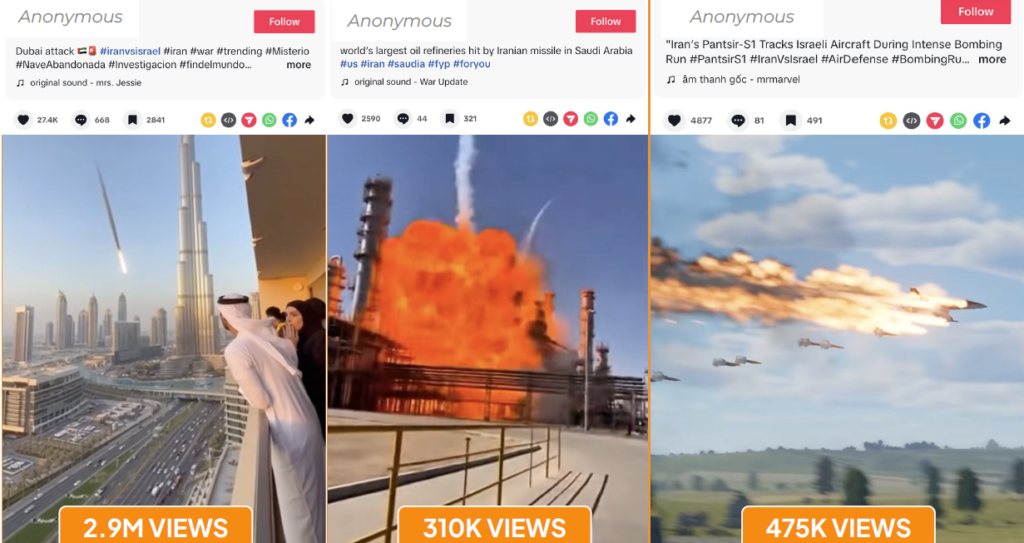

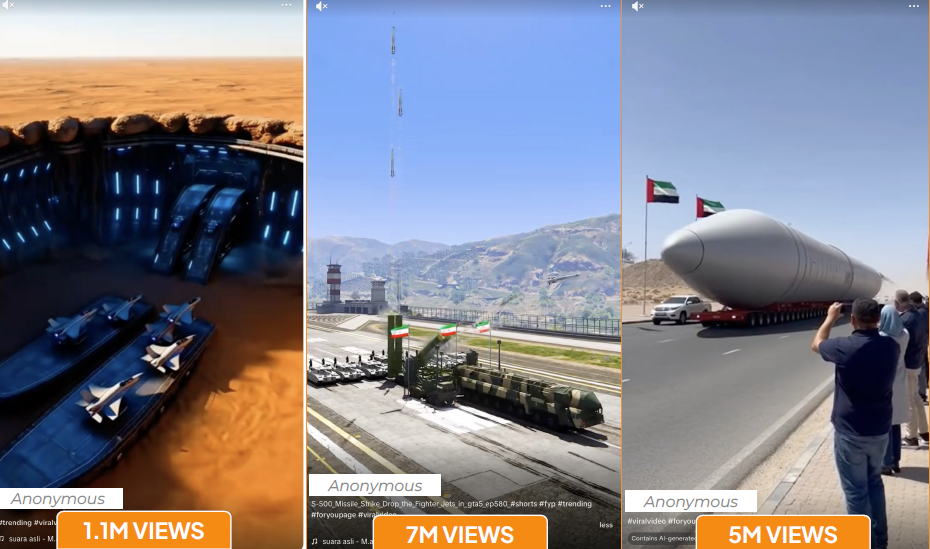

The first narrative portrayed Iran as successfully striking military targets across the region, including U.S. aircraft carriers, Israeli cities and military bases, the Burj Khalifa in Dubai, and Saudi oil refineries. Most of this content consisted of AI-generated deepfake videos depicting attacks that did not occur, or significantly exaggerating those that did. The most viral video from a single key account accumulated 2.9 million views on TikTok alone. Some videos included what appeared to be the original AI generation prompts in their captions, a technical artifact confirming they were synthetically produced.

The second narrative focused on projecting Iranian military and technological superiority through fabricated footage of stealth bombers resembling U.S. B-2 aircraft, underground weapons facilities, and advanced surveillance systems. One TikTok account identified as a key amplifier accumulated over 12 million views on posts featuring weapons-of-mass-destruction imagery.

The third was the most revealing. On March 1, CENTCOM released footage of strikes on Iranian warplanes on a tarmac. Within hours, inauthentic accounts began circulating videos claiming the aircraft were painted decoys, suggesting U.S. forces had bombed runway markings rather than real planes. The speed and consistency of the response pointed to centralized production. The narrative served a dual purpose: minimizing reputational damage from military losses while framing adversaries as incompetent. Notably, a significant portion of content targeting U.S. military assets was distributed in Spanish, suggesting a deliberate effort to reach Latin American and Spanish-speaking audiences.

The Second Wave: Manufacturing American Failure

Cyabra’s second investigation, covering activity through March 23, focused on 47 inauthentic accounts spreading anti-American narratives. These accounts generated over 40 million views, more than 1.1 million likes, and nearly 93,000 shares.

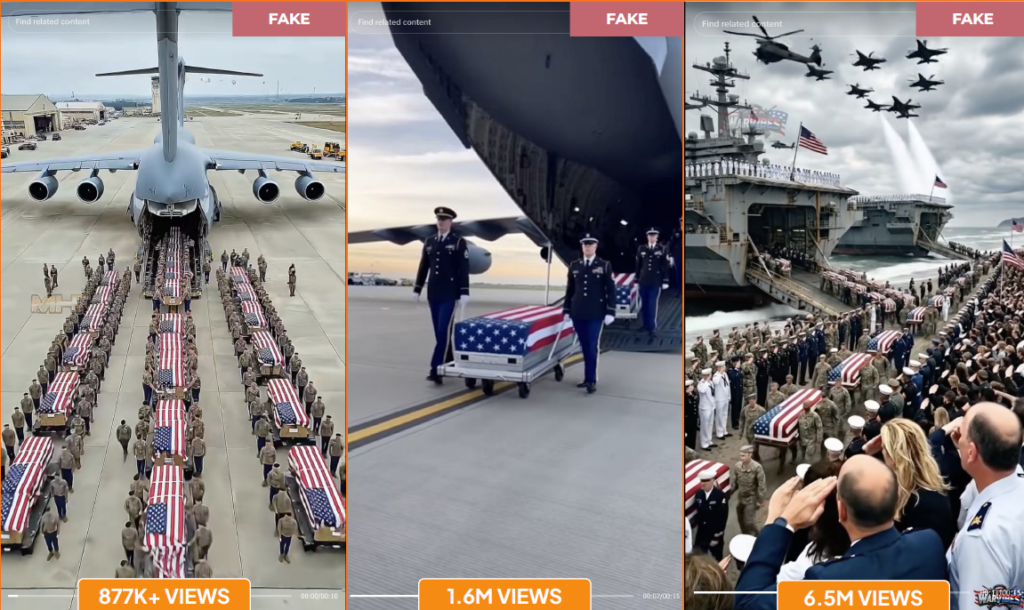

Rather than projecting Iranian strength, this phase focused on manufacturing the perception of American failure. Three themes dominated:

- Large-scale military funeral processions with flag-draped coffins, designed to convey the scale of American losses

- American soldiers in distress on the battlefield, simulating desperate pleas, grief over fallen comrades, and medical emergencies

- Children mourning the death or deployment of a parent in the U.S. military, leveraging themes of innocence and family separation to maximize emotional impact

Each theme was engineered for emotional impact. The funeral procession videos reached up to 6.5 million views individually. The children’s grief videos, the most widely circulated of the three, featured identical dialogue and visual staging across dozens of accounts, strongly suggesting content produced from a small set of centralized prompts.
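To illustrate how identical dialogue or captions reused across many accounts can be surfaced, here is a minimal Python sketch. It is not Cyabra's method: the function names, the normalization rules, the `min_accounts` threshold, and the sample posts are all assumptions made for illustration. It normalizes each text, then groups accounts that posted the same normalized text.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase, drop URLs, hashtags, mentions and punctuation, collapse spaces."""
    text = text.lower()
    text = re.sub(r"https?://\S+|[#@]\w+", " ", text)
    text = re.sub(r"[^\w\s]", " ", text)
    return " ".join(text.split())


def shared_scripts(posts, min_accounts=3):
    """Map each normalized text to the accounts that used it.

    Only texts used by at least `min_accounts` distinct accounts are kept.
    `posts` is an iterable of (account, text) pairs.
    """
    accounts_by_text = defaultdict(set)
    for account, text in posts:
        key = normalize(text)
        if key:  # skip posts that are empty after normalization
            accounts_by_text[key].add(account)
    return {
        text: sorted(accounts)
        for text, accounts in accounts_by_text.items()
        if len(accounts) >= min_accounts
    }


# Hypothetical sample data, not taken from the investigation.
posts = [
    ("acct_a", "Example caption text! #iranwar"),
    ("acct_b", "example caption text"),
    ("acct_c", "Example... caption text!! https://example.com/v/1"),
    ("acct_d", "An unrelated post"),
]
result = shared_scripts(posts)
```

Exact matching after normalization only catches verbatim reuse. Lightly paraphrased scripts would need a fuzzy similarity measure, which this sketch leaves out.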

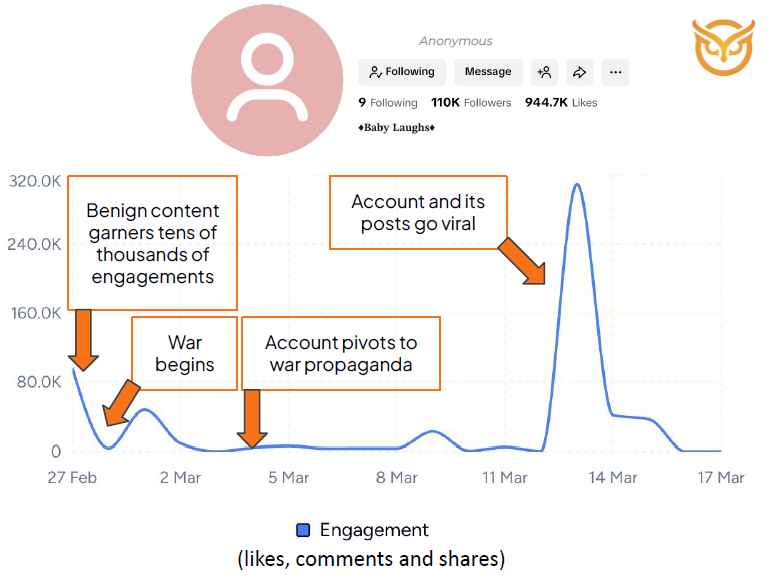

One key account exhibited a sleeper profile pattern: before the conflict, it had posted benign content featuring children, building a significant following. Once the conflict began, it pivoted abruptly to war-related content and generated 17 million views. A second account published seven videos, apparently from the same AI prompt, within a single minute on March 15, with inauthentic accounts then flooding the posts with coordinated comments to boost algorithmic visibility.
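A pattern like seven uploads within a single minute can be checked with a sliding-window count over one account's post times. The sketch below is illustrative only: the function name, the one-minute default window, and the sample timestamps are assumptions, not data from the investigation.

```python
from datetime import datetime, timedelta


def max_posts_in_window(timestamps, window=timedelta(minutes=1)):
    """Return the largest number of posts inside any span shorter than `window`.

    Uses a two-pointer sweep over the sorted timestamps.
    """
    ts = sorted(timestamps)
    best = 0
    start = 0
    for end in range(len(ts)):
        # Advance the left edge until the span is shorter than the window.
        while ts[end] - ts[start] >= window:
            start += 1
        best = max(best, end - start + 1)
    return best


# Hypothetical upload times for one account on March 15, 2026.
base = datetime(2026, 3, 15, 12, 0, 0)
uploads = [base + timedelta(seconds=s) for s in (0, 5, 12, 20, 31, 44, 58)]
uploads.append(base + timedelta(minutes=30))  # one later, isolated post

burst_size = max_posts_in_window(uploads)
```

An analyst would compare `burst_size` against what a human uploader could plausibly manage by hand. Where to set that cutoff is a judgment call.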

What Coordination Looks Like in Practice

Across both investigations, the same AI-generated videos and near-identical captions appeared across numerous unrelated accounts, indicating centralized content production. Fixed hashtag clusters, including #standwithiran, #westandwithiran, #prayforiran, #khamenei, #iranwar, #israelterroriststate, and #usterroriststate, were used consistently to boost reach. On March 5, 1,053 posts were published at 07:00 UTC, followed by 1,191 more at 11:00 UTC: synchronized bursts inconsistent with organic behavior.
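One simple way to flag synchronized bursts like these is to count posts per hour and compare each hour against the median hourly volume. The sketch below assumes hourly bins and a threshold of five times the median, both of which are illustrative choices. Only the two burst counts come from the figures above; the baseline of 40 posts in every other hour is hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone
from statistics import median


def hourly_spikes(timestamps, factor=5.0):
    """Return {hour_start: post_count} for hours exceeding factor * median."""
    counts = Counter(
        t.replace(minute=0, second=0, microsecond=0) for t in timestamps
    )
    baseline = median(counts.values())
    return {
        hour: n for hour, n in sorted(counts.items()) if n > factor * baseline
    }


day = datetime(2026, 3, 5, tzinfo=timezone.utc)

# Hypothetical baseline of 40 posts per hour, plus the two reported bursts.
per_hour = {h: 40 for h in range(24)}
per_hour[7] = 1053
per_hour[11] = 1191

# Spread each hour's posts one second apart so they stay inside that hour.
timestamps = [
    day + timedelta(hours=h, seconds=i)
    for h, n in per_hour.items()
    for i in range(n)
]

spikes = hourly_spikes(timestamps)
```

The median is used as the baseline because, unlike the mean, it is barely moved by the bursts themselves.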

The hashtag #israelterroriststate was also heavily used in Iran-linked operations Cyabra documented following Iran’s April 2024 attacks. Recurring hashtags, the same narrative framing, and the same coordination patterns across separate events point to a sustained, ongoing information operation rather than a spontaneous response to current events.

TikTok was responsible for 72% of total campaign views, over 105 million, with Facebook and X accounting for most of the remainder. The platform’s short-form format and recommendation algorithm made it particularly effective for distributing AI-generated visual content at scale.

What the Evidence Tells Us

The pro-Iran influence campaign did not need to persuade everyone. It needed to reach enough people, across enough platforms, to shape how the conflict was perceived, particularly in the United States, where much of the Spanish-language content aimed at Latin American audiences was also concentrated.

By analyzing the actors involved, mapping the coordinated behaviors connecting them, and documenting the content patterns confirming centralized structure, decision makers can distinguish this kind of influence operation from organic online noise, and gain the evidence needed for response and mitigation.

Download the comprehensive research by Cyabra:

- Iran’s Coordinated Campaign: Deepfakes Used to Manipulate the War Narrative

- How Iran Uses AI-Generated Content to Manufacture the Illusion of American Military Failure